Off-Topic: The Agile Manifesto, or How to Become Google

I’m not one of the best students of Agile methodologies out there, not even close. So I’ll allow myself the luxury of following perhaps a “naïve” view of what I personally see in this subject. And I know, I know — the word “Google” in the title is more for attention-grabbing. I’ll explain at the end ;-)

First of all, I want to separate two things: methodology and philosophy. The most relevant part is always the philosophy. If a company or professional hasn’t absorbed the Agile philosophy, they’ll rarely be truly Agile no matter how many methodology procedures they implement. You can read recipes for French dishes, but until you understand how a real French chef thinks, until you absorb the French culture, you’ll never have decent French cuisine — just low-quality mechanical copies.

What matters isn’t the “how” but the “why.” The Agile Manifesto says this right in its first value. Let’s recall the four Agile values:

We are uncovering better ways of developing software by doing it and helping others do it. Through this work we have come to value:

- Individuals and interactions over processes and tools

- Working software over comprehensive documentation

- Customer collaboration over contract negotiation

- Responding to change over following a plan

That is, while there is value in the items on the right, we value the items on the left more.

The first value already says it all: individuals over processes.

In this article I want to show why the vast majority of companies are not effectively Agile, even when they implement Agile “methodologies.”

Principles Behind the Agile Manifesto

To recap, it will be useful to cite the 12 Principles behind the Manifesto of Values. Many people have read all these items. But “reading” and “understanding” are two completely different things. For purposes of my explanation, I’ll put some keywords in bold to refer back to later.

We follow these principles:

Our highest priority is to satisfy the customer through early and continuous delivery of valuable software.

Welcome changing requirements, even late in development. Agile processes harness change for the customer’s competitive advantage.

Deliver working software frequently, from a couple of weeks to a couple of months, with a preference for the shorter timescale.

Business people and developers must work together daily throughout the project.

Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done.

The most efficient and effective method of conveying information to and within a development team is face-to-face conversation.

Working software is the primary measure of progress.

Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely.

Continuous attention to technical excellence and good design enhances agility.

Simplicity — the art of maximizing the amount of work not done — is essential.

The best architectures, requirements, and designs emerge from self-organizing teams.

At regular intervals, the team reflects on how to become more effective, then adjusts its behavior accordingly.

The Fifth Element

“Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done.”

I honestly don’t know how the Manifesto’s founders arrived at these principles, but based on this point alone I imagine they must be truly very experienced. This element, for me, is the densest of all the Principles.

Corollaries to the Fifth Element are the 11th and 12th Elements. I’ll explain why.

Scaling Agility

Recently I cited a chapter from the book Scaling Lean & Agile Development: Thinking and Organizational Tools for Large-Scale Scrum. I believe many people didn’t have the patience to read it, so I’ll summarize the part that interests me.

This PDF discusses the differences between Feature Teams and Component Teams. Simplified, an Agile team is necessarily a Feature Team — a team that is as independent as possible and takes ownership of a complete product or feature of a product, from start to finish, from requirements to customer contact.

A Component Team is the traditional, departmental style. Each team is responsible only for a segment of various products. Interface team, infrastructure team, architecture team, visual components team, database team, quality team, and so on.

A Feature Team is a cross-functional team, typically formed by generalists. A Component Team is a limited team, typically formed by specialists. A Scrum Team is, by definition, a Feature Team capable of carrying out all work on a Product Backlog item.

As I said, forget the “how” for now.

Conway’s Law

Melvin Conway, in April 1968, wrote a paper where one excerpt would enter the annals of computing history:

“Any organization that designs a system (broadly defined) will produce a design whose structure is a copy of the organization’s communication structure.”

As explained on his site, the famous Frederick Brooks cited this paper and its idea in the classic The Mythical Man-Month (which every tech professional should read), calling the idea Conway’s Law. Brooks recognized that the law had important corollaries in management theory. Here’s one of his statements:

“Since the first design found is never the best, the system concept may need to change. Therefore, the flexibility of the organization is important to effective design.”

As I’ve already said in Killing the Average, we still apply Gaussian methodologies and processes today. I still haven’t managed to write more complete material explaining this, so watch the video I recorded and read the reference materials I point to there. For now, just understand that the vast majority of what we know as uncontested truths of Organization Theory no longer applies.

These outdated theories — which delineate rigid chains of command, various “feudal” departments, cultures based on position and political power — were essentially made to give great degrees of control to section heads in 19th-century factory assembly lines, where the maximum expected of a worker was tightening screws, not executing work requiring much brain activity.

As Conway’s Law says: teams tend to create structures that mirror the structure of the organization. The corollary: have a company that incentivizes mediocre work and your teams will be mediocre. As I was talking about the Fifth Element: "…give them the environment and support they need…"

The PDF from Larman mentions Brad Silverberg, Senior VP of Windows and Office, who emphasized:

“Software tends to reflect the structure of the organization that built it. If you have a large, slow organization, you tend to build large, slow software.”

An ironic statement, but true.

Generalists vs Specialists

Traditional companies that delude themselves into thinking they can achieve total control over their teams only succeed in creating unproductive, complacent, limited employees with no initiative or perseverance, who will never learn anything new.

This is because the Total Control style prefers division of labor, as in factory assembly lines, where each team does a part of the whole. In this management style, the incentive for Specialists reigns.

Specialists are those employees who typically started working on a certain piece of the system and only they know how that piece works. They don’t feel very comfortable sharing that knowledge and feel even less comfortable accepting external changes. They tend to be treated as heroes because when there’s urgency, there’s no time for another person to learn about that piece and only they can solve the problem.

If you have heroes of this type in your company, they should be the first to be let go.

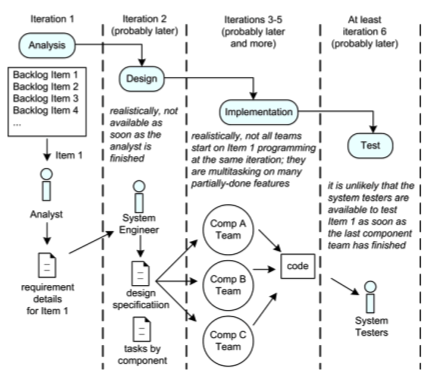

It’s no coincidence that Scrum teams are, by definition, Feature Teams. Otherwise the first effect that emerges is this:

Recognize this? Each Component Team does its Sprint, its iteration. Only when one team’s iteration ends can the next one begin. Precisely because none of the teams controls the complete Feature and depends on pieces from the previous ones.

This has a name: welcome back to Waterfall! It doesn’t matter if people call it a mini-waterfall — a cascade is a cascade, regardless of its size.
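A back-of-the-envelope sketch of that cascade, under simplifying assumptions that are mine and not Larman’s: every team works in fixed-length sprints, each Component Team can only start once the previous one has finished, and a Feature Team completes the whole item inside one sprint. The function names and numbers are hypothetical.

```python
def component_team_lead_time(team_count: int, sprint_weeks: int) -> int:
    """Weeks until a feature ships when each Component Team needs its own
    sprint and must wait for the previous team to finish (assumption)."""
    if team_count < 1 or sprint_weeks < 1:
        raise ValueError("team_count and sprint_weeks must be positive")
    return team_count * sprint_weeks


def feature_team_lead_time(sprint_weeks: int) -> int:
    """Weeks until a feature ships when one cross-functional team owns it
    end to end and finishes it within a single sprint (assumption)."""
    if sprint_weeks < 1:
        raise ValueError("sprint_weeks must be positive")
    return sprint_weeks


if __name__ == "__main__":
    # Hypothetical chain: interface, database, infrastructure and quality teams.
    print(component_team_lead_time(team_count=4, sprint_weeks=2))  # 8
    print(feature_team_lead_time(sprint_weeks=2))                  # 2
```

The model ignores handoff delays and rework, both of which would only make the sequential case look worse.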

Other major side effects of Component Teams: since nobody effectively owns the product, none of the teams feels responsible for the whole, only for their part. The behaviors of “I did my part, the other team is at fault” naturally emerge.

Furthermore, Component Teams, by their very nature of dealing with only one type of problem, force their members to become specialists. And in doing so, force these professionals to limit their knowledge, giving absolutely no motivation to learn new things.

Remember the Fifth Element? “Build projects around motivated individuals…” Where are the motivated individuals in an organization that forces everyone toward mediocrity? Of course, this doesn’t mean everyone needs to know everything about everything — there will always be one or more disciplines where each professional fits best. But all should be encouraged to know a little of everything else. That’s exactly why Feature Teams are important: you have several specialists where each knows a little of the different things their colleague next to them knows how to do. And with this, Pair Programming takes on new meaning.

Furthermore, Test Driven Development starts making much more sense if the team is truly responsible for a product/feature that adds real value to the end customer. Once there’s no interdependency between teams it becomes much clearer how to perform complete testing. But there’s another question about testing I’ll explain further below.

Self-Organization

This is the crucial point in the new organizational theories. Don Tapscott and Anthony D. Williams would call it Wikinomics — the economics of collaboration.

Traditional organizations are horrified by chaos and therefore pursue absolute control in a pathological way, to the point of doing harm.

And they should indeed be horrified by chaos. But what they need to understand is that there is the phenomenon of order emerging from chaos.

We’re accustomed to thinking of isolated events, of results based on the sum of independent events. Put a spoonful of sugar and the tea gets sweet. Put two spoonfuls of sugar and the tea gets twice as sweet.

However, dynamic systems cannot be defined this way. Certain systems are very sensitive to initial conditions, giving non-linear results. In particular, natural phenomena like social relationships, food chains, economic events, are all non-linear systems.
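To make the contrast concrete, here is a minimal sketch that puts the linear tea example next to a textbook non-linear system. The logistic map is my choice of illustration, not something taken from the references, and the function names are hypothetical.

```python
def sweetness(spoonfuls: float) -> float:
    """The linear tea example: output is proportional to input."""
    return 1.0 * spoonfuls


def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate x -> r * x * (1 - x), a simple non-linear system."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_divergence(x0: float, delta: float, steps: int) -> float:
    """Largest gap between two runs whose starting points differ by delta."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + delta, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Linear: a tiny change in the input gives a tiny change in the output.
    print(sweetness(2.000000001) - sweetness(2.0))
    # Non-linear: a starting difference of one part in a billion stays tiny
    # for a few steps and then grows to the size of the signal itself.
    print(max_divergence(x0=0.2, delta=1e-9, steps=5))
    print(max_divergence(x0=0.2, delta=1e-9, steps=60))
```

In the linear case the error in the output never exceeds the error in the input; in the non-linear case the same tiny error eventually dominates the result, which is what sensitivity to initial conditions means.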

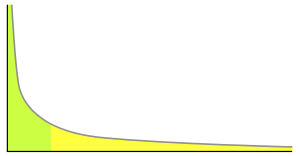

This returns to my talk about Power Law Distributions, or Pareto Distributions. To recap: a Platonic and linear world can be modeled according to Gauss. This type of distribution is extremely comfortable for analysts because it has a well-defined mean and a finite, stable standard deviation. Power Laws, in turn, can have a standard deviation that tends toward infinity and, when the tail is heavy enough, no finite mean at all!
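For the classic Pareto distribution this can be made precise. The sketch below assumes the standard Type I form with shape parameter alpha and minimum value x_min (both names are mine): the mean is finite only when alpha is greater than 1, and the variance only when alpha is greater than 2.

```python
import math


def pareto_mean(alpha: float, x_min: float = 1.0) -> float:
    """Mean of a Pareto (Type I) distribution; infinite when alpha <= 1."""
    if alpha <= 0 or x_min <= 0:
        raise ValueError("alpha and x_min must be positive")
    if alpha <= 1:
        return math.inf
    return alpha * x_min / (alpha - 1)


def pareto_variance(alpha: float, x_min: float = 1.0) -> float:
    """Variance of a Pareto (Type I) distribution; infinite when alpha <= 2."""
    if alpha <= 0 or x_min <= 0:
        raise ValueError("alpha and x_min must be positive")
    if alpha <= 2:
        return math.inf
    return x_min ** 2 * alpha / ((alpha - 1) ** 2 * (alpha - 2))


if __name__ == "__main__":
    for alpha in (0.8, 1.5, 3.0):
        print(alpha, pareto_mean(alpha), pareto_variance(alpha))
```

With alpha at 1.5 the mean exists but the variance does not, so an average computed from samples never settles the way it would for a bell curve.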

The most obvious point is that “Bell Curves” (normal/Gaussian curves) require independent, isolated events, like rolling dice or flipping an unbiased coin. It’s not hard to see, for example, that human behavior can be anything but independent: by definition, humans relate to each other, so we form highly dependent systems.

But something important about dynamic networks is that, contrary to what was imagined, they don’t form networks with random connections (whose distribution of connections per node would be roughly bell-shaped) but instead exhibit a Pareto distribution. Albert-László Barabási explains the formation of Scale-Free Networks in more detail.

As I was saying, if someone thinks about nodes (people, animals, neurons, viruses) and connections (friendship, transmission, synapses), it’s most natural to first imagine that nodes connect in a random and chaotic way. However, Barabási’s studies and detailed observations of natural phenomena led to the conclusion that they tend to form scale-free networks, whose distribution of connections per node follows Pareto: a few nodes concentrate the vast majority of connections and many nodes share the few remaining connections, forming something like this:

For those of us in technology, this would look like a representation of the Internet, where the nodes are websites and the connections are, literally, the links between them. And that’s exactly right: the Internet follows a Pareto distribution.

For those with socialist tendencies, forget Marx — he was obviously wrong to be inspired by the Gaussian curve and try to level all of society to the average. The thinking of “taking from the rich to give to the poor” is completely anti-natural. The natural is exactly the opposite: a few people will always hold the vast majority of the world’s wealth, while most people have less. The only way for the poor to become wealthy is to make the entire system wealthier, including the wealthy themselves. Nature always privileges meritocracy, never mediocrity.

Assuming everyone studies a little more about Barabási, Poincaré, Mandelbrot, Zipf, Pareto, Bak and subjects like scale-free networks, power laws, self-organized criticality, phase transition, chaos, fractals, we’ll quickly conclude: order does indeed emerge from chaos, networks form as Barabási described, through mechanisms like preferential attachment, and in the end we’ll have scale-free, self-organized networks.
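Preferential attachment is simple enough to simulate. What follows is a minimal sketch in the spirit of Barabási’s growth model, not his exact formulation: each new node brings a single link and attaches to an existing node with probability proportional to the links that node already has. The function name, the seed, and the network size are arbitrary choices of mine.

```python
import random
from collections import Counter


def grow_network(node_count: int, seed: int = 42) -> Counter:
    """Grow a network one node at a time; each newcomer links to one existing
    node chosen with probability proportional to its current degree."""
    if node_count < 2:
        raise ValueError("need at least two nodes to start")
    rng = random.Random(seed)
    degree = Counter({0: 1, 1: 1})  # start from one link between nodes 0 and 1
    # Every node appears in this list once per link it has, so a uniform
    # draw from the list is a degree-proportional draw over nodes.
    endpoints = [0, 1]
    for new_node in range(2, node_count):
        target = rng.choice(endpoints)
        degree[new_node] += 1
        degree[target] += 1
        endpoints.extend((new_node, target))
    return degree


if __name__ == "__main__":
    degree = grow_network(2000)
    ranked = sorted(degree.values(), reverse=True)
    hubs = ranked[: len(ranked) // 5]  # the best-connected 20% of nodes
    print("largest hub:", ranked[0], "links")
    print("median node:", ranked[len(ranked) // 2], "links")
    print("share of link endpoints held by the top 20%:", sum(hubs) / sum(ranked))
```

Nobody designs the hubs in this model. They emerge only because early, well-connected nodes are more likely to be picked again, which is the self-organization the paragraph above describes.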

80/20

Pareto’s famous 80/20 rule didn’t come from nowhere. Studying Italy many years ago, Vilfredo Pareto found that 80% of Italian land was in the hands of no more than 20% of the population. Hence “80/20.”

This division is exactly what a Pareto distribution shows, as in the figure below:

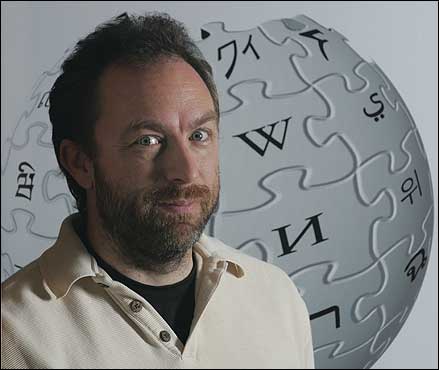
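The 80/20 split corresponds to one particular Pareto shape. As a sketch, assuming an ideal Pareto distribution with shape alpha greater than 1, the share of the total held by the top fraction p of the population is p raised to the power (1 - 1/alpha). The names below are mine.

```python
import math


def top_share(top_fraction: float, alpha: float) -> float:
    """Share of the total held by the richest top_fraction of the population
    under an ideal Pareto distribution with shape alpha > 1."""
    if not 0 < top_fraction <= 1:
        raise ValueError("top_fraction must be in (0, 1]")
    if alpha <= 1:
        raise ValueError("the total is only finite for alpha > 1")
    return top_fraction ** (1 - 1 / alpha)


# The shape that yields exactly 80/20: alpha = log(5) / log(4), about 1.16.
ALPHA_80_20 = math.log(5) / math.log(4)

if __name__ == "__main__":
    print(top_share(0.20, ALPHA_80_20))  # the top 20% hold about 0.8
    print(top_share(0.04, ALPHA_80_20))  # the top 4% hold about 0.64
```

The second line shows the self-similarity of the rule: the top 20% of the top 20% hold 80% of the 80%.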

As Chris Anderson explains in his book The Long Tail, think about the famous Wikipedia. At the time of his book’s publication in 2006, Wikipedia already had 860,000 articles, versus 80,000 for the Encyclopædia Britannica.

Jimmy Wales’s idea was bold and quite controversial, despite the good intention of providing a rich, extensive, free encyclopedia to all the world’s people, including poor children in underdeveloped countries who would otherwise perhaps never have access to information.

Wales started the Nupedia project in 2000 and launched Wikipedia in 2001, with only a few entries and an idea that had actually become popular before that, with Linus Torvalds and his Linux: create a totally open platform (free as in freedom) where anyone who wanted could contribute, review, refine.

Looking back in 2008, everyone would say that Jimbo (as he’s known) is a genius. But in the year 2000 he was considered crazy. When we see things in retrospect it’s always much simpler to create a narrative that perfectly fits the events that already happened. As Nassim Nicholas Taleb would say: after a Black Swan happens, it’s easy to explain it, but before it happens it’s impossible to predict it.

Following Torvalds’s example, Jimbo created an environment suitable for collaborators. He was able to motivate people and, most importantly, trust them, because unlike the traditional editorial system, there would be no editors or filters: everything anyone typed would be immediately available. His hope was that gross errors would be quickly corrected by the collaborators themselves, just as Eric S. Raymond describes in the classic The Cathedral and the Bazaar:

“Given enough eyeballs, all bugs are shallow.”

Eric called this statement “Linus’s Law,” which can also be explained as “given enough beta-testers and co-developers, almost every problem will be found quickly and the fix will be obvious to someone.”
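A rough way to see why more eyeballs help, as a sketch under an assumption that is mine and not Raymond’s: each reviewer independently has the same small chance of spotting a given bug. Real reviewers are not independent, so treat the numbers as an illustration only.

```python
def chance_bug_is_spotted(reviewers: int, p_single: float) -> float:
    """Probability that at least one of `reviewers` people spots a bug,
    assuming each spots it independently with probability p_single."""
    if reviewers < 0:
        raise ValueError("reviewers must not be negative")
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    return 1.0 - (1.0 - p_single) ** reviewers


if __name__ == "__main__":
    # Hypothetical numbers: each person has a 1% chance of noticing the bug.
    for reviewers in (1, 10, 100, 1000):
        print(reviewers, chance_bug_is_spotted(reviewers, p_single=0.01))
```

With a 1% chance per person, a hundred reviewers already find the bug about 63% of the time, and a thousand make it a near certainty.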

Wikipedia leveraged this same Law: in a dynamic, open system where collaborators begin participating slowly, evolving into a self-organized scale-free network, errors will happen, but most will be quickly corrected. The benefit of getting information from thousands of people spread across the world is orders of magnitude superior to the small defects that appear from time to time.

In a traditional control model, people tend to think like Britannica: fewer entries but all very accurate. Which is worth more: 80,000 entries with a near-zero error rate, or nearly 1 million entries with a small error rate? Some retrograde critics still believe that a single small error in Wikipedia invalidates a million things it gets right.

Software as Art

Pete McBreen wrote in 2001 about Software Craftsmanship and I’m a defender of that definition.

Many people think of Software, programming, as Engineering. I’m sorry to inform them it isn’t. Software is music. Software development is very close to composing music.

Software is painting. Developing Software is like painting a picture. I don’t want to diminish the field of engineering, which has already brought us incredible wonders around the world like the Great Wall of China and the Egyptian pyramids. But in the specific case of software, it’s simply more convenient to think of it as Engineering than as Art.

Again, the same reason: attempt at control, since engineering is predictable, controllable, measurable. Art is creative, rebellious, unpredictable, chaotic. I find it very interesting that many great figures of the past like Pythagoras and Da Vinci were great generalists, artists with much work in the fields of science and mathematics. That’s exactly what a software developer needs to be: a Renaissance artist.

As I said before, a member of a Feature Team has some specialties, but has an absolutely open mind to trying new things, learning new crafts, exploring and creating.

Art cannot be implemented just by reading procedures. And that’s exactly what many of those who call themselves “developers” or “programmers” do: they learn one (or a few) ways of doing things and continue doing it as they were taught. Artists learn from mentors, train tirelessly in a long process of trial and error, draw inspiration from the works of other masters, understanding their techniques and trying to blend them with their own.

Although not an absolute truth, I tend to think that software developers who actively participate in Open Source projects, like Linux, are much more complete programmers than programmers who graduated from university and went on to integrate “Component Teams” within traditional organizations — especially if they stayed too long in the same organization.

An expert programmer, member of a Component Team, in a traditional Gaussian organization, is exactly like a house painter: only knows how to roll the paint roller up and down, symmetrically, with no creativity whatsoever and absolutely no talent for learning and self-evolution.

80% of an employee’s professional profile directly reflects the organization where they work; the other 20% is the employee’s own fault for refusing to leave their comfort zone. Both are 100% to blame for the fact that only 29% of software projects are considered successes. Both are 100% to blame for the USD 55 billion spent on cancelled software projects. (source: Standish Chaos Reports)

The Open Source World

I believe there’s no need to explain much more about open source projects. There are no miracles: it doesn’t mean that just because a project is open source it will automatically be as successful as Linux. Quite the contrary: hundreds of projects never see the light of day.

Again, we’re talking about Pareto — perhaps only 20% of open source projects truly succeed on a large scale. However, the other 80% are identified as failures much more quickly, some merge into larger projects, some simply stop. The stop decision is much faster and more effective than in traditional corporate projects that have already invested resources (time and money, plus the reputation of some of those involved).

With everything I explained above, it becomes easy to understand that Open Source projects begin with simple initial conditions: an idea, a small implementation, a few people. It also starts to make sense to understand how they evolve from chaos to scale-free networks through self-organization.

It’s not hard to understand that these projects have no way of being implemented through very rigid prior consensus — they could only evolve into Feature Teams where collaborators typically have different and complementary skills.

It’s also not hard to understand that, as in Wikipedia, the most important and/or well-known entries are filled in first, then the more obscure ones are filled over time. In the best Pareto style, 20% of priorities happen first. In an environment where resources tend to be scarce (no physical presence, collaborators are volunteers, motivating people is even more important), the priorities — which deliver the most value to the group as a whole — are implemented first.

Understanding Software as Art also makes it simple to understand that developers who participate in various open source projects are automatically exposed to many different expressions of art, and as such to different ways of implementing software. A good developer will begin incorporating these differences into their own style, automatically greatly improving the quality of their work.

A developer, alone or in a familiar team, has very little motivation to create tests for their own code. But when they find themselves in a situation where they’re collaborating in a community where any stranger can see their code and therefore assess their reputation, the motivation to create quality code, decently covered with tests, becomes more obvious. That’s why I said earlier that Test-Driven Development not only starts making more sense but becomes a real necessity.

The project’s creator, the developer or group of programmers who started the project, will necessarily be forced to manage it. And in an open environment where people have no titles, no salaries, no bosses, no direct clients, any attempt to use Gaussian techniques of traditional project management will immediately fall flat. Now we’re talking about real projects, without the comfort zone of a cubicle. The project’s maintainer will find themselves in a position where they’ll be forced to make decisions. They’ll quickly understand that unanimity on every issue is impossible and will wear the hat of benevolent dictator. A dictator who is too rigid and authoritarian will drive away all their collaborators (who have no obligation to follow them). One who is too flexible risks demonstrating insecurity, indecision, and sluggishness, potentially motivating a kind of coup d’état in which the project gets forked and a more charismatic and effective maintainer takes their place. Or worse: the project may simply stop and cease to exist.

There is no more hostile but at the same time more rewarding environment for a true project manager than open source projects. Take away a Manager’s title and power and only then can you assess whether they truly know what “managing” means.

Agile Principles, Redux

With all that said, I think we can revisit the 5th, 11th, and 12th principles:

Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done.

The best architectures, requirements, and designs emerge from self-organizing teams.

At regular intervals, the team reflects on how to become more effective, then adjusts its behavior accordingly.

The other principles are consequences:

Given an adequate environment, with motivated professionals effectively raised above average, we can truly trust their capacity for self-organization. The organic, non-hierarchical structure will naturally lead its members to readjust their routines according to the problems faced. That leads them to generate quality code, where only the essential is produced, with attention to refactoring, testing, and continuous integration, which naturally leads to the best architectures. The system as a whole feeds itself in a continuous positive feedback loop, creating a sustainable, always productive environment, with professionals researching and implementing technological innovations that from time to time give quality and productivity leaps for the company as a whole.

As a result, customers will receive products that add real value, and changing requirements can effectively be accepted without major problems, since the organization is flexible and each member feels responsible for the whole. Furthermore, in this virtuous cycle, professionals are constantly learning and their skills keep growing, generating an innovative, above-average company that doesn’t rely on the past to try futilely to predict the future. It no longer needs to, because its professionals are finally prepared for whatever future arrives. Instead of trying to predict the future, people will be ready for any future, and this is fundamental: constant changes no longer frighten them; on the contrary, they want changes.

How to Get There?

It was no accident that I wrote about:

- Killing the Average

- The Power of Myth, Redux

- Collaborating on Github

- Understanding Git and Installing Gitorious.

The first two articles focus on the professional figure: an attempt to wake up the clock-punching employees to the fact that the world isn’t static, the future isn’t stable, and the Gaussian world is an illusion.

The last two articles talk specifically about a tool: Git. It’s a hint for creating the environment that supports what a developer needs, as stated in the Fifth Principle. But tools, like methodologies, are useless if both company and employee don’t internalize the Agile Values and Principles.

Hence this article.

As I said at the start, I don’t consider myself any great scholar of this philosophy, but for some reason I identify with its foundations and clearly observe their application in practice in Open Source projects. It’s also clear that in Pareto’s world this model has not only survived but has borne impressive fruit — like Wikipedia, like the fact that over 60% of the world’s web servers run Apache, and so on.

Are you a company where software is part of your core business? Apply the “Open Source” model, or the “Bazaar” model, per Eric Raymond. Not necessarily opening your code to the general public on the internet, of course.

Create a simple repository that all employees have unrestricted access to, where the barrier to adoption is low. Encourage them to participate in projects outside their traditional departments. At first, poorly made code, low quality, no tests, and all kinds of defects will be revealed. But the objective isn’t to point fingers — it’s to break the vicious cycle that generates this kind of software.

“A bad programmer will write bad code, regardless of the language or tool you give them.” Therefore, the objective is to create excellent Programmers, not to change tools. Impressively, a good programmer will write good code even in ASP or Perl (again, without wanting to denigrate Perl, but just speaking to its reputation — created mainly by bad programmers).

Like a sculpture, it’s time to trim the rough edges, redo some pieces, reshape what doesn’t look right, and bring this work to truly become a piece of art, collaboratively. It’s the best way to avoid waste, since people with a little extra time in one team can help their colleagues who are more overloaded in another.

At first there will be disorder and signs of chaos. There will be duplication of work. Desynchronization and communication problems will happen (after all, nobody was accustomed to actually communicating). Skill and knowledge deficiencies will become obvious. All existing problems will come to the surface, and it will be ugly and uncomfortable.

However, if there’s just a bit of persistence and trust in people, you’ll see the group as a whole emerge from the chaos. The true leaders will emerge as hubs in the scale-free network. The preferential attachment phenomenon will begin to outline the order. With enough time, people will self-organize organically.

From there, yes — we can truly start talking about sustainable growth with a constant or growing productivity pace.

A Day of Innovation

At Google there’s that old story about how all employees have the right to one day a week to do whatever they want.

Put that way alone, the first thing that comes to mind is a bunch of kids riding bicycles, playing video games, sipping caipirinhas poolside on campus.

But assuming you’ve read my entire article, imagine Orkut Büyükkökten on one of those days. He has the right environment, the right culture, the right motivation, the right knowledge. He decides to start a personal project to experiment with social networking concepts.

He has a place to put his code. He also understands that he must detach himself from it. He naturally understands how collaboration in the “open source” style works. Because of this he knows how to communicate.

He shares his project internally. Team members — and members of other teams, all also tuned into the right culture of proactivity, innovation, acceptance of change, and collaboration — immediately understand the value of the idea. More than that: they know where to download the code and how to start collaborating.

I don’t really know how Google works — I’ve never worked there and it probably has just as many problems as any normal company. Even so I fantasize that many of their products started this way: in a permissive environment, oriented toward innovation. It’s not enough just to hire PhDs from MIT or Stanford if there isn’t an adequate environment and culture to truly make them produce. Since Larry and Sergey had a beginning in this permissive open source world, I imagine they created an organization that follows exactly this model, even if it was instinctive.

Many companies want to be the next Google. But I want to remind them that it takes much more than comfortable sofas, foosball tables, video game rooms, and Japanese food restaurants inside the company to become a Google. That part is easy: just buy them.

What’s difficult is cultivating a culture. Many companies complain that high-quality professionals resign and look for other companies. Obvious: truly intelligent people don’t accept a Gaussian culture for very long. We don’t like the same old thing, we don’t like retrograde thinking and lack of attitude. True artists need inspiring environments to be creative.

Most companies’ cubicles are a terrible place to create.

Bibliography

Finally, once the philosophy is understood, we can return to the methodology. Now it makes sense to apply the tools that methodologies like XP or Scrum advocate: Pair Programming, Planning Game, Test Driven Development, Continuous Integration, Refactoring, Small Releases, Collective Code Ownership, Simple Design, Sustainable Pace, etc.

Read with fresh eyes my reading recommendations:

- Larman, Craig & Vodde, Bas – Scaling Lean & Agile Development: Thinking and Organizational Tools for Large-Scale Scrum

- Fowler, Chad – My Job Went to India: 52 Ways to Save Your Job

- Christensen, Clayton M. – The Innovator’s Dilemma: The Revolutionary Book that Will Change the Way You Do Business

- Beck, Kent – Extreme Programming Explained: Embrace Change

- Poppendieck, Mary & Poppendieck, Tom – Lean Software Development: An Agile Toolkit

- Brooks, Frederick P. – The Mythical Man-Month: Essays on Software Engineering

- McBreen, Pete – Software Craftsmanship: The New Imperative

- Raymond, Eric S. – The Cathedral & the Bazaar: Musings on Linux and Open Source by an Accidental Revolutionary

- Tapscott, Don & Williams, Anthony D. – Wikinomics: How Mass Collaboration Changes Everything

- Anderson, Chris – The Long Tail: Why the Future of Business is Selling Less of More (Revised and Updated Edition)

- Gladwell, Malcolm – The Tipping Point: How Little Things Can Make a Big Difference

- Gladwell, Malcolm – Blink: The Power of Thinking Without Thinking

- Taleb, Nassim Nicholas – Fooled by Randomness: The Hidden Role of Chance in Life and in the Markets

- Taleb, Nassim Nicholas – The Black Swan: The Impact of the Highly Improbable

- Mandelbrot, Benoit – The Misbehavior of Markets: A Fractal View of Risk, Ruin & Reward

- Sagan, Carl – The Demon-Haunted World: Science as a Candle in the Dark

- Sagan, Carl – Pale Blue Dot: A Vision of the Human Future in Space

- Dawkins, Richard – The Selfish Gene

Tip: Most of these books have Portuguese translations.